We are approaching the end of the first semester in our school and this is typically a time when we review our assessments, give out our grammar and vocabulary tests and write all the reports. Like many schools, our reports contain the categories: Grammar & Vocabulary, Listening, Reading, Speaking, Writing. The students do three assessments in reading, listening and writing that are spread out over the semester and then a larger grammar and vocabulary test at the end of the semester. The marks for each component get converted to a score out of twenty and the scores for all five components are added together to give a percentage, which is the student’s final grade.
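For anyone who likes to see the arithmetic spelled out, here is a minimal sketch of that calculation in Python. The function names and the student's marks are invented for illustration. It also assumes that the marks from a component's separate assessments are pooled before being converted, which our reports don't actually specify.

```python
# Report categories as listed above; each contributes a score out of 20.
COMPONENTS = ["Grammar & Vocabulary", "Listening", "Reading", "Speaking", "Writing"]


def score_out_of_20(marks_gained, marks_available):
    """Convert raw marks for one component to a score out of twenty."""
    if marks_available <= 0:
        raise ValueError("marks_available must be positive")
    return marks_gained * 20 / marks_available


def final_grade(component_scores):
    """Add the five scores out of twenty to give a percentage."""
    return sum(component_scores[name] for name in COMPONENTS)


# Hypothetical student: the marks below are invented for illustration.
scores = {
    "Grammar & Vocabulary": score_out_of_20(45, 60),  # 15.0
    "Listening": score_out_of_20(24, 30),  # 16.0
    "Reading": score_out_of_20(21, 30),  # 14.0
    "Speaking": 12.0,  # entered directly as a score out of 20
    "Writing": score_out_of_20(34, 40),  # 17.0
}
print(final_grade(scores))  # 74.0
```

Five scores out of twenty add up to a mark out of one hundred, so the sum can be read directly as a percentage.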

The eagle-eyed amongst you may have spotted the problem here.

Assessing speaking is always difficult. One of the biggest problems I find is knowing whether I am actually assessing their speaking or whether I am assessing their spoken production of grammar and vocabulary. To what extent does personality play a part in this? Susan Cain’s TED talk on “The Power of Introverts” reminds us that just because people aren’t saying something, it doesn’t mean they can’t.

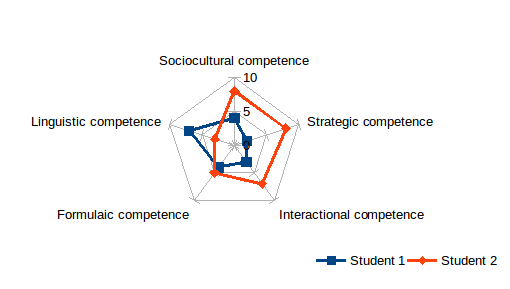

Rob Szabo and Pete Rutherford recently wrote an article arguing for a more nuanced approach. In “Radar charts and communicative competence”, they point out that because communicative competence is a composite of many different aspects, no student can simply be described as good or bad at speaking; rather, each student has strengths and weaknesses within speaking.

Szabo and Rutherford identify six aspects of communicative competence (from Celce-Murcia) and diagram them as follows:

This is an enticing idea. It builds up a much broader picture of speaking ability than what is often taken – a general, global impression of the student. It also allows both the teacher and the student to focus on particular areas for improvement: in the diagram above, student 1 needs to develop their language system; it isn’t actually “speaking” that they have the problem with. Equally, student 2 needs to build better coping strategies for when they don’t understand or when someone doesn’t understand them. These things aren’t necessarily quick fixes, but they do allow for a much clearer focus in class input and feedback than just giving students more discussion practice.

From a business perspective, which is mostly where Szabo & Rutherford’s interests lie, there is also added value here for the employer or other stakeholders. One of the points that David Graddol made at the 2014 IATEFL conference (a video of the session is available) was that language ability rests on so many different dimensions that in certain areas (Graddol mentioned India as an example) employers may well need someone with C1 level speaking ability, while it doesn’t matter whether they can read or write beyond A2. Graddol kept his differentiation within the bounds of the CEFR and across abilities; Szabo and Rutherford take a more micro-level approach and suggest that the level of analysis they propose may well be useful to employers in assigning tasks and responsibilities. Quite what the students may feel about that is another matter.

Whilst this idea has been developed in a business English context, it is a useful one that should also make the leap from the specialist to the general, as it has clear applications in a number of areas. In reviewing the different competences, there are crossovers with the assessment categories used in Cambridge Exams, for example, where discourse management, interactive communication, pronunciation, and grammar and vocabulary have clear counterparts. Again, diagramming pre-exam performance in this way can help teachers and students get a clearer picture of what needs doing and can make instruction more effective.

A helpful next step for the authors might be to think about how this idea can translate into practice in the wider world. At present, it seems as though a mark out of ten is awarded for each competence. While this inevitably gets the teacher thinking in more detail about what exactly their student can or can’t do, no definitions are provided as to what a “10” or a “3” might mean.

Monday 26 January 2015 at 19:48

Hi David. Thanks for posting this. I’d just like to add that we have been tinkering with the idea lately and we invite criticism from all stakeholders in the teaching, testing and indeed, learning, game.

Here’s a column we wrote along with Gareth Humphrey, the Director of Studies of Marcus Evans Linguarama, a major business English training provider in Germany. Gareth points out some of the weaknesses and difficulties associated with our model.

http://www.peterutherford.de/2015/01/conversations-about-communicative.html?m=1